Speaking the Machine’s Language: How Nexus Websites Aces SEO in the Age of AI

The internet is currently undergoing its most radical transformation since the invention of the hyperlink. For the last two decades, SEO was largely a game of “Human Readability”—writing catchy headlines, optimizing keywords, and ensuring users stayed on the page.

But there is a second audience that visits your website every single day. They don’t have eyes, they don’t experience emotions, and they don’t “read” in the traditional sense. These visitors are the Search Bots (Googlebot, Bingbot) and, increasingly, the Large Language Models (LLMs) behind tools like ChatGPT, Perplexity, and Google’s AI Overviews.

Most agencies treat this second audience as an afterthought. They install a generic plugin, tick a few boxes, and hope for the best.

At Nexus Websites, we take a different approach. We realized that to ace modern SEO, we couldn’t rely on off-the-shelf tools that treat a local plumber the same way they treat a global e-commerce giant. We built our own proprietary engine—an intelligent Schema generator—that allows us to speak the machine’s language with native fluency.

This is the story of the “Invisible Layer” of the web, and how we engineer it to drive verified ranking increases and future-proof our clients against the AI revolution.

The Problem with “Generic” SEO

To understand why our approach works, you first have to understand the limitation of standard tools.

Most WordPress SEO plugins rely on static templates. If you create a page, they might generically label it as a WebPage or an Article. This is fine for a blog, but it is catastrophic for specialized businesses.

Imagine a high-end landscaping company. If their portfolio page is just marked as an ImageGallery, Google sees a bunch of pictures. But if that page is intelligently marked up as a LocalBusiness with specific areaServed coordinates, openingHours, and priceRange properties, the search engine suddenly understands the context of the business.

Generic plugins often fail to make these connections. They don’t know that the “5 stars” in your footer is actually an AggregateRating, or that the phone number in your header should be explicitly linked to a ContactPoint whose contactType is customer service.
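To make the landscaping example concrete, here is a minimal sketch of what such a LocalBusiness node could look like when built in Python and serialized to JSON-LD. The business name, phone number, coordinates, and rating figures are all made up for illustration, and the function name is our own placeholder rather than part of any tool described here.

```python
import json


def local_business_node(name, phone, lat, lon, radius_m, hours, price_range,
                        rating_value, review_count):
    """Return a schema.org LocalBusiness node as a plain dict."""
    return {
        "@context": "https://schema.org",
        "@type": "LocalBusiness",
        "name": name,
        "priceRange": price_range,
        "openingHours": hours,  # schema.org text format, e.g. "Mo-Fr 08:00-17:00"
        "areaServed": {
            # A circle around a midpoint is one way to express a service area.
            "@type": "GeoCircle",
            "geoMidpoint": {
                "@type": "GeoCoordinates",
                "latitude": lat,
                "longitude": lon,
            },
            "geoRadius": radius_m,  # metres around the midpoint
        },
        "aggregateRating": {
            "@type": "AggregateRating",
            "ratingValue": rating_value,
            "reviewCount": review_count,
        },
        "contactPoint": {
            "@type": "ContactPoint",
            "telephone": phone,
            "contactType": "customer service",
        },
    }


# All values below are hypothetical sample data.
node = local_business_node(
    name="Example Landscaping",
    phone="+44 20 0000 0000",
    lat=51.5072,
    lon=-0.1276,
    radius_m=25000,
    hours="Mo-Fr 08:00-17:00",
    price_range="$$$",
    rating_value=4.9,
    review_count=87,
)
print(json.dumps(node, indent=2))
```

The printed JSON is what would sit inside a script tag of type application/ld+json on the page.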

This lack of precision leads to “flat” search results—standard blue links that get lost in the noise. We needed something that could handle complexity. We needed a tool that could look at a page, understand its DNA, and generate a verified digital passport for it.

The Nexus Protocol: A Multi-Step Transformation

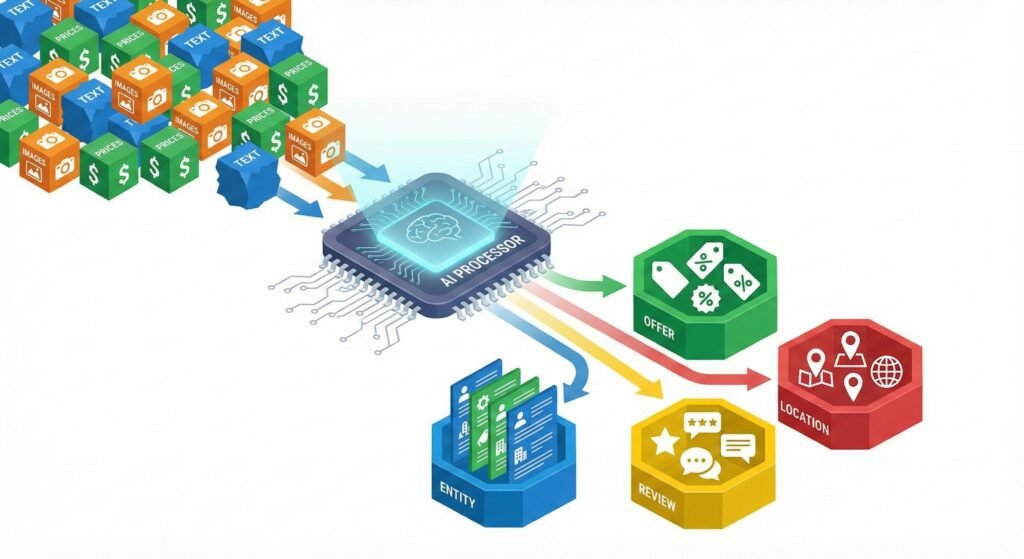

Our proprietary tool doesn’t just “add code”; it transforms content. We use an automated, multi-stage process that ensures every client page is serving the richest possible data to search engines.

Step 1: Contextual Analysis (The “Brain”)

The first step in our process is analysis. Before a single line of code is output, our system scans the raw content of the page. It looks at the “Human” content—the headlines, the images, the custom fields, and the text snippets—and determines exactly what the entity is.

Is this a Product? An Event? A Recipe? A MedicalService?

This distinction is critical. Google requires different “ingredients” for different types of content. A Product needs a name plus at least one of an offer, a review, or an aggregate rating. An Event must have a name, a start date, and a location. If you miss these, you don’t get the Rich Result. Our system identifies the entity type with high precision, ensuring we never try to fit a square peg into a round hole.
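As a rough illustration of the idea (not the actual engine, whose internals are not shown here), entity detection can be sketched as a set of rules over the fields a page has filled in. The field names such as start_date and ingredients are hypothetical CMS fields chosen for this example.

```python
def detect_entity_type(fields: dict) -> str:
    """Guess a schema.org type from which CMS fields are populated.

    The rules are checked from most specific to least specific, and a
    plain WebPage is the fallback when nothing more specific matches.
    """
    if fields.get("start_date") and fields.get("location"):
        return "Event"
    if fields.get("ingredients"):
        return "Recipe"
    if fields.get("price") is not None or fields.get("sku"):
        return "Product"
    return "WebPage"


# Hypothetical pages, described only by their custom fields.
workshop = {"title": "Garden Design Workshop",
            "start_date": "2025-06-01", "location": "London"}
mower = {"title": "Electric Mower", "price": 249.0}
about = {"title": "About Us"}

print(detect_entity_type(workshop))  # Event
print(detect_entity_type(mower))     # Product
print(detect_entity_type(about))     # WebPage
```

A real classifier would weigh far more signals than this, but the principle is the same: decide the type first, then look up what that type requires.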

Step 2: Dynamic Mapping & The “Transformer”

This is where the magic happens. Once we know what the page is, we have to bridge the gap between the website’s database and the Schema graph.

In dynamic environments (like complex e-commerce sites or service portals), data changes constantly. Prices update, stock levels fluctuate, and service areas expand. Hard-coding this information is a recipe for disaster.

Our engine uses a “Transformer” method. It hooks directly into the server-side variables of the website. When we build a client’s site, we map their internal data directly to the Schema output.

- If the client changes a price in their dashboard, the price property in the Offer schema updates instantly.

- If they upload a new featured image, the primaryImageOfPage node automatically regenerates with the new URL.

This creates a “living” Schema layer. It is never out of date, and it never contradicts the visual content on the page. Google’s structured data guidelines require markup to match what users can actually see, and a mismatch can cost a page its rich results.
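One way to picture this kind of mapping is a function that builds the JSON-LD from the live record every time the page renders, so there is no stored copy to go stale. The sketch below assumes a simple ProductRecord class standing in for whatever the site’s database returns; the class, its fields, and the function names are illustrative assumptions, not the production code.

```python
import json
from dataclasses import dataclass


@dataclass
class ProductRecord:
    """Hypothetical stand-in for a row loaded from the site's database."""
    name: str
    price: float
    currency: str
    in_stock: bool
    image_url: str


def product_jsonld(record: ProductRecord) -> dict:
    """Map the current state of a record to a schema.org Product node."""
    availability = "InStock" if record.in_stock else "OutOfStock"
    return {
        "@context": "https://schema.org",
        "@type": "Product",
        "name": record.name,
        "image": record.image_url,
        "offers": {
            "@type": "Offer",
            "price": f"{record.price:.2f}",
            "priceCurrency": record.currency,
            "availability": f"https://schema.org/{availability}",
        },
    }


def render_script_tag(record: ProductRecord) -> str:
    """Serialize at render time; '<' is escaped so the JSON cannot close the tag."""
    payload = json.dumps(product_jsonld(record)).replace("<", "\\u003c")
    return f'<script type="application/ld+json">{payload}</script>'


record = ProductRecord("Oak Planter", 29.99, "GBP", True,
                       "https://example.com/img/planter.jpg")
before = render_script_tag(record)

record.price = 24.50  # the client edits the price in their dashboard
after = render_script_tag(record)
print(after)
```

Because nothing is cached between the two calls, the second render picks up the new price without any separate Schema update step.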

Step 3: The Strict Compliance Protocol

The most common reason Rich Snippets fail to appear is “Schema Drift”—where the code contains minor syntax errors or missing required fields.

We implemented a “Strict Compliance Protocol” within our engine. It acts as a bouncer at the door of the search results. Before the Schema is finalized, the system checks it against the official Schema.org standards and Google’s specific Rich Result mandates.

For example, if we are marking up a Product, the system enforces the inclusion of at least one of an Offer, a Review, or an AggregateRating. If a client forgets to add a price, our system detects the gap and can apply intelligent fallbacks to ensure the code remains valid, so the item is not flagged as invalid in Search Console.
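A compliance check of this kind can be sketched as a lookup table of required properties per type, plus a list of “at least one of” groups. The rules below cover only Product and Event as described above and are a simplified assumption; the real requirements for each rich result type live in Google’s documentation and change over time.

```python
# Properties that must all be present for a given type.
REQUIRED = {
    "Product": ["name"],
    "Event": ["name", "startDate", "location"],
}

# Groups where at least one property must be present.
ONE_OF = {
    "Product": ["offers", "review", "aggregateRating"],
}


def validate(node: dict) -> list[str]:
    """Return a list of human-readable problems; an empty list means it passed."""
    problems = []
    node_type = node.get("@type")
    for prop in REQUIRED.get(node_type, []):
        if not node.get(prop):
            problems.append(f"{node_type}: missing required property '{prop}'")
    options = ONE_OF.get(node_type)
    if options and not any(node.get(prop) for prop in options):
        problems.append(
            f"{node_type}: needs at least one of {', '.join(options)}"
        )
    return problems


incomplete = {"@type": "Product", "name": "Oak Planter"}
complete = {
    "@type": "Product",
    "name": "Oak Planter",
    "offers": {"@type": "Offer", "price": "29.99", "priceCurrency": "GBP"},
}

print(validate(incomplete))
print(validate(complete))
```

This sketch only reports gaps. How a fallback fills them is a separate decision, since any value it supplies still has to match what is visible on the page.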

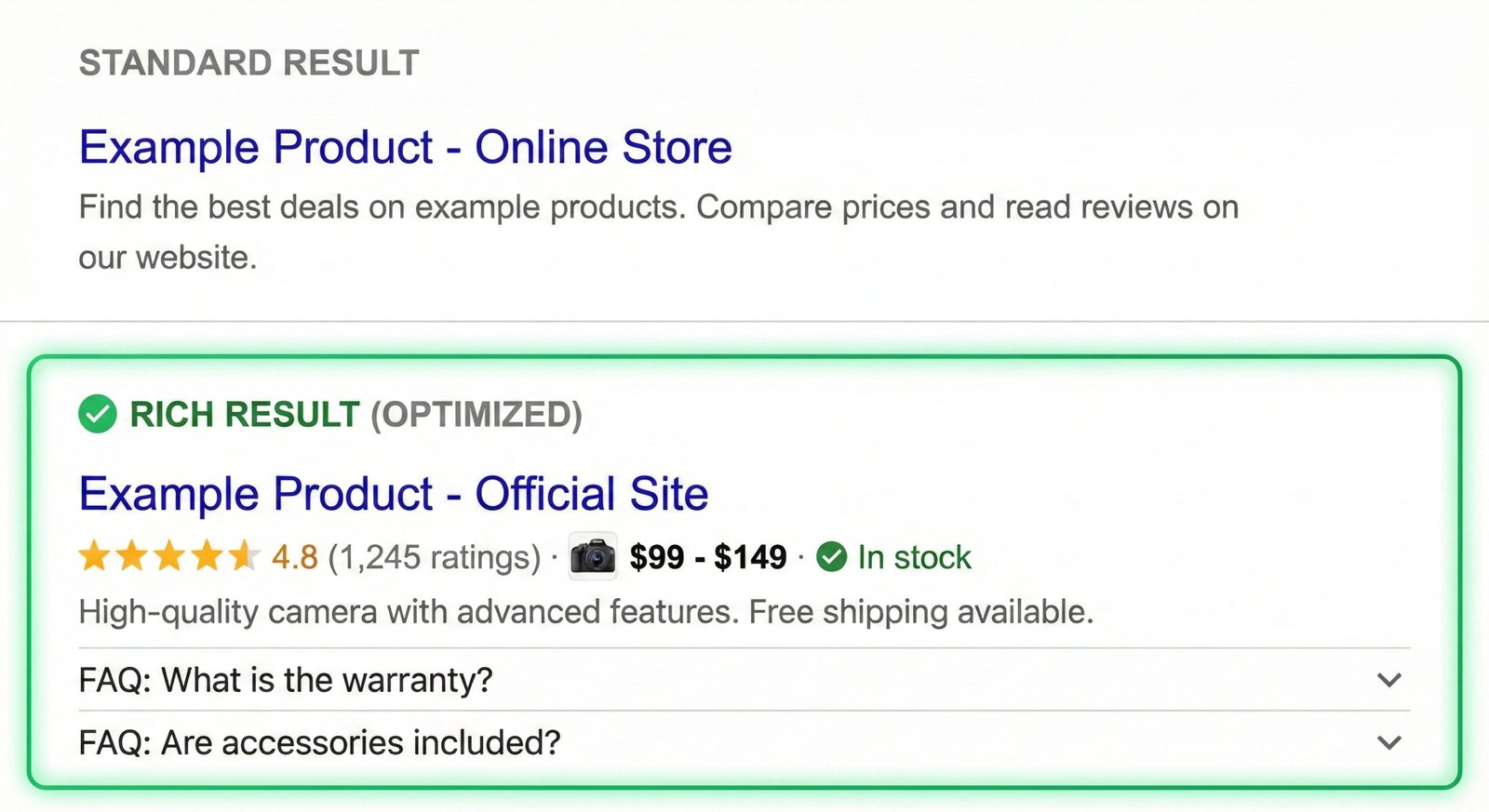

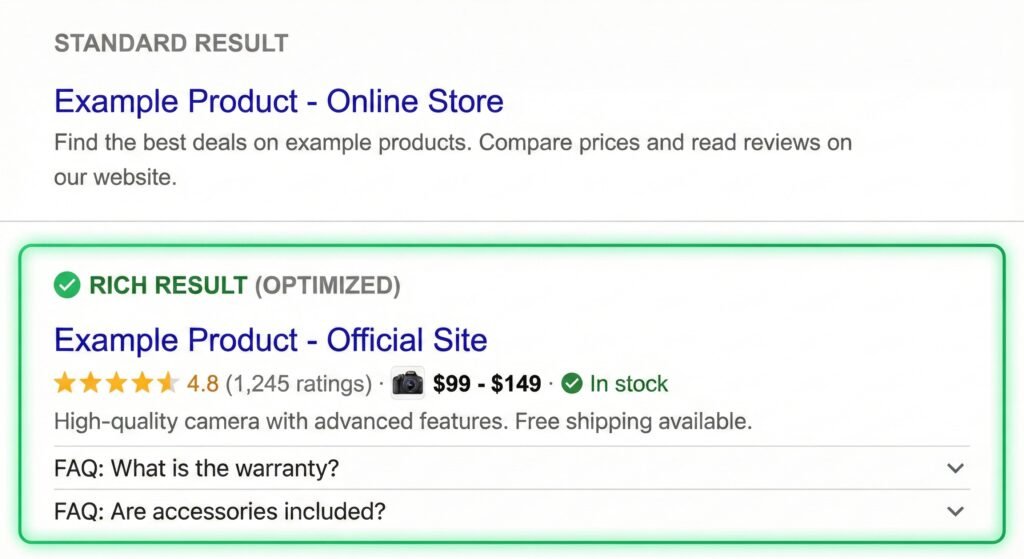

Why “Rich Snippets” Are the Holy Grail

Why do we go to all this trouble? Why write thousands of lines of code just to generate invisible JSON-LD data?

The answer is Real Estate.

In modern search results, ranking #1 is no longer enough. You need to dominate the pixel space.

- Star Ratings: Can increase click-through rate (CTR) by up to 35%.

- Price Snippets: Pre-qualify visitors (people who click already know the price).

- FAQ Dropdowns: Push competitors further down the screen. Since 2023, Google has shown these mainly for well-known government and health sites.

- Sitelinks Search Box: Allowed users to search your site directly from Google, until Google retired the feature in late 2024.

By utilizing our custom engine, we don’t just tell Google “this is a page.” We hand Google a silver platter containing the exact data it needs to build these beautiful, high-converting display boxes. We turn a standard search listing into a billboard.

The New Frontier: Optimizing for AI and LLMs

This is the most exciting part of our current strategy. Search is changing. We are moving from “Search Engines” (finding links) to “Answer Engines” (generating responses).

When you ask ChatGPT or Perplexity a question like “Who is the best commercial architect in London?”, the AI doesn’t just look for keywords. It looks for relationships. It looks for a Knowledge Graph.

Our Schema engine is built for this AI future. We don’t just create isolated nodes of data; we create a Connected Graph.

- We link the LocalBusiness node to the WebPage.

- We link the Founder (Person) to the Business.

- We link the AreaServed to specific geographic coordinates.

This helps LLMs “reason” about the business. It helps the AI understand that Nexus Websites is an Organization that Offers a Service in a specific Location.
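In JSON-LD, a connected graph like this is usually expressed with an @graph array whose nodes point at each other through @id references. Here is a minimal sketch under that assumption; the fragment identifiers (#webpage, #business, #founder), the sample names, and the helper that checks for broken links are all our own illustration.

```python
import json


def build_graph(site_url, business_name, founder_name, lat, lon, radius_m):
    """Build a small @graph where every relationship is an @id reference."""
    page_id = site_url + "#webpage"
    business_id = site_url + "#business"
    founder_id = site_url + "#founder"
    return {
        "@context": "https://schema.org",
        "@graph": [
            {"@type": "WebPage", "@id": page_id, "url": site_url,
             "about": {"@id": business_id}},
            {"@type": "LocalBusiness", "@id": business_id,
             "name": business_name,
             "mainEntityOfPage": {"@id": page_id},
             "founder": {"@id": founder_id},
             "areaServed": {
                 "@type": "GeoCircle",
                 "geoMidpoint": {"@type": "GeoCoordinates",
                                 "latitude": lat, "longitude": lon},
                 "geoRadius": radius_m,
             }},
            {"@type": "Person", "@id": founder_id, "name": founder_name},
        ],
    }


def dangling_references(graph):
    """Return every referenced @id that no node in the graph defines."""
    defined = {node["@id"] for node in graph["@graph"]}
    referenced = set()

    def walk(value):
        if isinstance(value, dict):
            if set(value) == {"@id"}:  # a bare reference, not a full node
                referenced.add(value["@id"])
            for child in value.values():
                walk(child)
        elif isinstance(value, list):
            for item in value:
                walk(item)

    walk(graph["@graph"])
    return referenced - defined


# Hypothetical sample data.
graph = build_graph("https://example.com/", "Example Architects",
                    "Jane Doe", 51.5072, -0.1276, 40000)
print(json.dumps(graph, indent=2))
print(dangling_references(graph))
```

An empty set from dangling_references means every link in the graph lands on a real node, which is the property that makes the graph “connected” rather than a pile of isolated snippets.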

The /llms.txt Advantage

Furthermore, we are one of the first agencies to implement the proposed /llms.txt standard.

Think of robots.txt, the file that tells crawlers where they can’t go. /llms.txt is the opposite: a VIP invite for AI bots.

Our system automatically generates this specialized file, providing a clean, Markdown-formatted summary of the website’s most critical content, stripped of design noise. When an AI crawler visits a Nexus-optimized site, it doesn’t have to guess what the important pages are. We hand-deliver a curated list of “Key Pages” and “Content Concepts” directly to the bot’s logic center.

This is designed to increase the likelihood of our clients being cited as sources in AI-generated answers. While competitors are blocking AI bots, we are feeding them high-quality, structured data.
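For readers who have not seen one, the /llms.txt proposal describes a Markdown file with a top-level title, a short blockquote summary, and sections of annotated links. The sketch below generates a file in that shape. The site name, URLs, and section contents are invented for the example, and the function is a simplified illustration rather than the generator described above.

```python
def build_llms_txt(site_name, summary, sections):
    """Render an llms.txt-style Markdown document.

    `sections` maps a heading to a list of (title, url, note) tuples.
    """
    lines = [f"# {site_name}", "", f"> {summary}", ""]
    for heading, links in sections.items():
        lines.append(f"## {heading}")
        lines.append("")
        for title, url, note in links:
            lines.append(f"- [{title}]({url}): {note}")
        lines.append("")
    return "\n".join(lines).rstrip() + "\n"


# Hypothetical site content.
llms_txt = build_llms_txt(
    "Example Landscaping",
    "Garden design and maintenance for homes in Greater London.",
    {
        "Key Pages": [
            ("Services", "https://example.com/services",
             "What we offer and typical price ranges"),
            ("Portfolio", "https://example.com/portfolio",
             "Completed projects with locations"),
        ],
        "Content Concepts": [
            ("Planting Guide", "https://example.com/guide",
             "Seasonal advice written by our designers"),
        ],
    },
)
print(llms_txt)
```

The output would be served as plain text at the site root, alongside robots.txt.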

Verified Results: The Impact on ROI

The beauty of this engineering-focused approach to SEO is that the results are verified. We don’t have to guess if it’s working.

We use the validation tools that Google itself provides. We can see the “Green Checks” in the Rich Results Test. More importantly, we see the impact in the metrics:

- Higher Click-Through Rates: Even without moving up in position, the visual “pop” of a Rich Snippet draws more eyes.

- Lower Bounce Rates: Because the search result gave more accurate info (like price or hours), the traffic that lands on the site is more qualified.

- Voice Search Wins: Voice assistants (Siri, Alexa) can draw on structured data to answer questions like “What time does [Business] open?”

Conclusion

At Nexus Websites, we believe that a website is more than just a digital brochure; it is a data transmission device.

While compelling copy and beautiful design are for the humans, the Schema Architecture is for the machines that decide if those humans ever find you. By building our own custom tools rather than relying on generic plugins, we ensure that our clients speak the language of the future fluently.

We don’t just optimize for the search of today. We are already optimizing for the AI-driven web of tomorrow.